Last Monday, AI developer Runway revealed Gen-3 Alpha, the latest in a growing number of generative AI content creation tools. With a series of videos demonstrating just how far AI-generated text-to-video has come in only a little over a year, this latest release triggered the now familiar feelings of uncertainty, anxiety and excitement. However, the existential shock of machine learning entering our lives is beginning to wane, and the initial dread and fear have been replaced with a beige annoyance, like the feeling one has at the start of an HR training module: you know it’s coming, you know you’ll have to deal with it, but you’d rather be engaging with anything else and you can’t wait till it’s over.

It’s true that humans are perennially adept at adaptation, but this waning terror may also be down to the reality of what it feels like to interact with AI today: corny, corporate and clumsy. Terry Nguyen recently wrote this great piece, which follows the evolution of attitudes towards AI through the lens of its portrayal in film and sums up this point really nicely.

Perhaps the boredom we feel towards AI is more towards the narrow applications promoted by the tech giants: AI as productivity tool, as a skills substitute for creative workers. - Terry Nguyen

I do think, however, that we’re on the precipice of a flashpoint moment. Just spending five minutes looking at what Runway’s new release is capable of brings a flood of ‘it’s so over’ feelings: its fidelity is just too good not to get freaked out. Whereas earlier generative AI models had telltale signs that quickly helped viewers flag what they were seeing as fake, these markers of inauthenticity are barely visible in Gen-3 Alpha. If no one told you that you were watching AI-generated videos, you just wouldn’t be able to tell.

But the reason I wanted to write this piece wasn’t to add to the discourse around AI’s threats to jobs or its benefits, or to talk about mankind optimizing itself into obsolescence. Rather, I want to interrogate a fact that doesn’t get a lot of attention: if AI does become the norm for creating video content, then the future of a visual medium lies in written language. These tools are text-to-video and thus create a hybridization of two distinctly different methods of communication, an amalgamation that could erode the intangible and indescribable essence of the visual arts.

Where is the subtext in text-to-video?

To me, the most curious and powerful aspect of visual mediums is that they communicate something unspoken: a feeling or an idea that the creator felt words to be incapable of carrying. Simply by existing, these nonverbal expressions ask the question “what did the artist mean by that?”, sending the viewer on an adventure of conjecture. Where does that mystery go when there’s a word behind every scene, painting or photo?

Words are fascinating: they swell with meaning to the point that they can sometimes get in the way of transmitting an idea or a feeling. Despite their clarity, they are at risk of being misused or misunderstood, or of carrying baggage that makes interpreting them clumsy and effortful. They’re devices of what is popularly called the left brain - the logical, linear, rational side. Visual communications, on the other hand, can transcend semantics, each work becoming a subliminal language of its own in place of words. They are unburdened ideas, feelings and intentions that speak to the intuitive, imaginative, emotionally receptive side popularly called the right brain.

Words can at best explain and at worst bludgeon an idea into the mind of the recipient, and that’s assuming they are wielded with some level of intelligence and creativity. Things get more concerning when the reported decline in verbal intelligence is factored in and when innovation in language is stagnant. What I mean by the latter is that, rather than looking forward to find new ways to describe new ideas, we look backwards - referencing and repurposing words out of laziness, inarticulateness or lack of inspiration. How often have you heard post-, new- or -ification tacked onto old nomenclature to (often poorly) describe a new thing?

The pros and cons of prose and prompts.

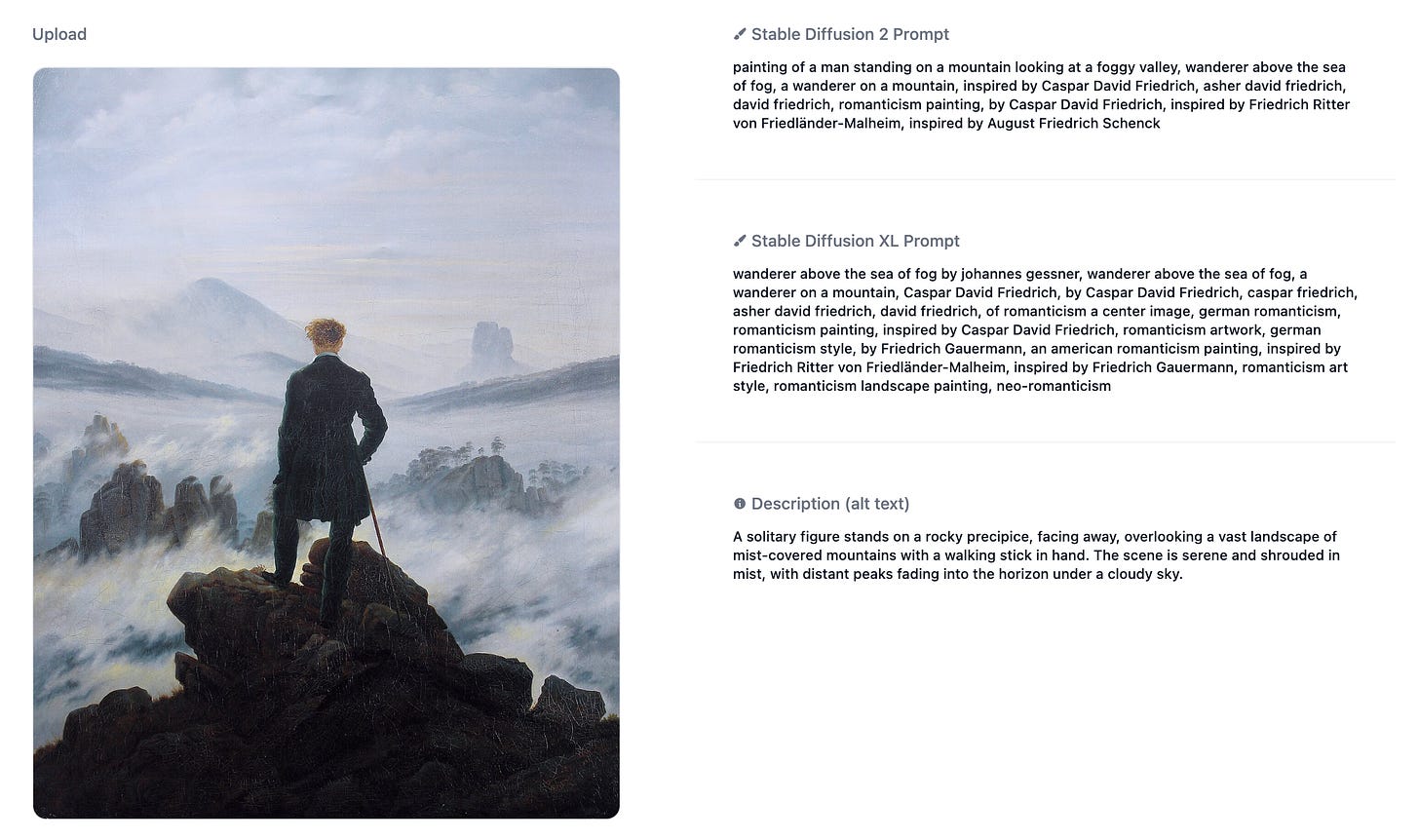

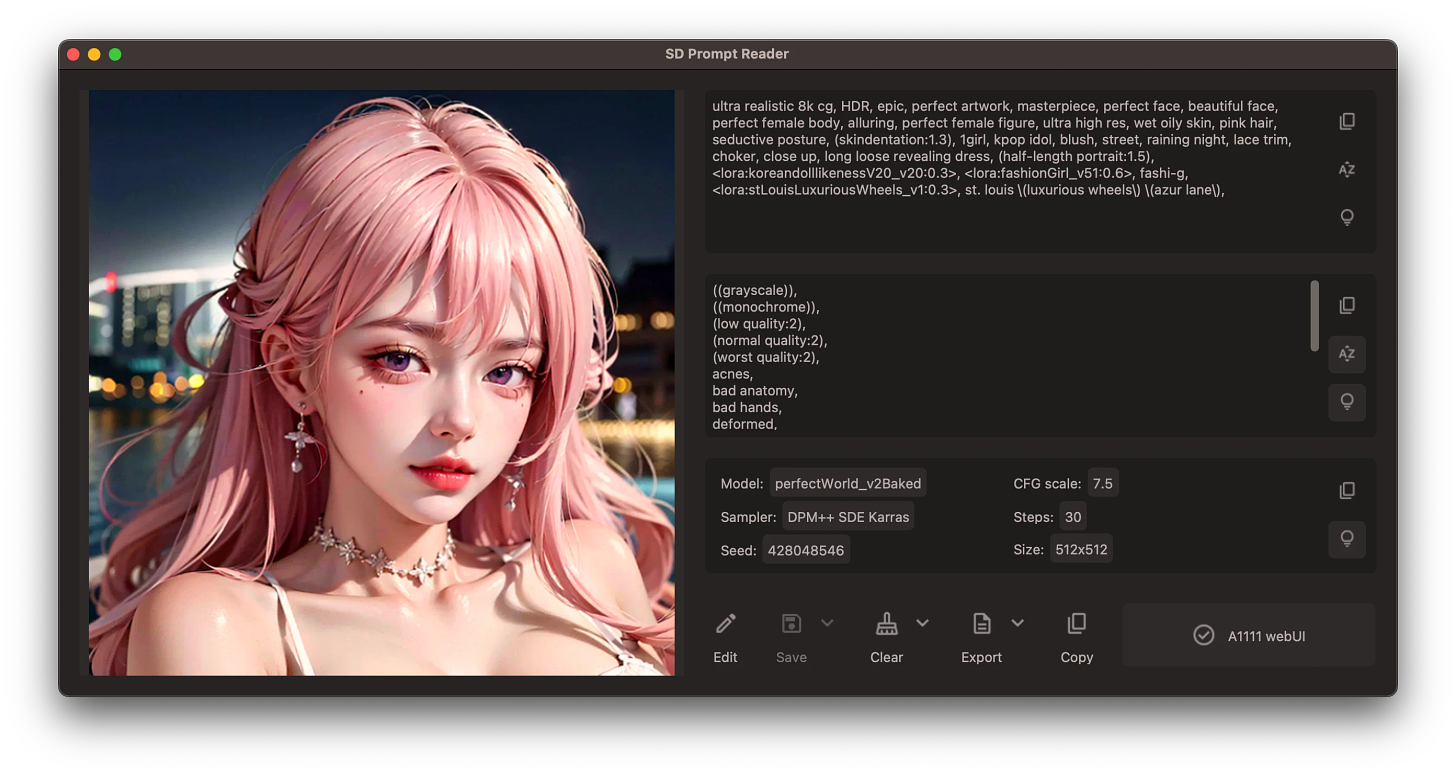

Beyond the erasure of subtext, beyond the stagnation of linguistic innovation, there’s another unsettling aspect of the language used to create images in this way: the prompt itself. Here’s an example of a prompt reverse-engineered from an AI-generated still image:

ultra realistic 8k cg, HDR, epic, perfet artwork, masterpiece, perfect face, beautiful face, perfect female body, alluring, perfect female figure, ultra high res, wet oily skin, pink hair, seductive posture, (skinindentation:1.3), 1girl, kpop idol, blush, street, raining night, lace trim, choker, close up, long loose revealing dress, (half-length portrait:1.5), <lora:koreandolllikenessV20_v20:0.3>, <lora:fashionGirl_v51:0.6>, fashi-g, <lora:stLouisLuxuriousWheels_v1:0.3>, st.louis \(luxurious wheels) (\azur lane),

And the image this prompt generated:

Far from descriptive and further from poetic, the prompt is a technical potluck of keywords, metadata and code - doing nothing to conjure in the imagination the image that the AI eventually creates. Instead, it resembles something you’d find in the source code of a web UI. To really have the machine do your bidding, you need more than just basic knowledge of how it actually works (i.e. understanding how to separate keywords, knowing how to weight your prompt, knowing what a seed is, understanding negative prompts, etc.); otherwise it’s close to impossible to create “quality” content. There’s a technical prerequisite to using AI tools with any sort of proficiency, which creates a divide between those who know AI and those who don’t and undercuts the notion that these tools will democratize creativity.
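To make the “potluck” concrete, here is a rough Python sketch that pulls a shortened version of the prompt above apart into its three ingredients: plain keywords, weighted terms like `(half-length portrait:1.5)`, and the `<lora:...>` tags. I’m assuming the syntax follows the convention used by some Stable Diffusion front ends, where a number after a colon sets how strongly a term or add-on model counts; the `dissect_prompt` helper and its regular expressions are my own illustration, not part of any real tool.

```python
import re

# Hypothetical helper for illustration only; the two patterns below are an
# assumption about the prompt syntax, not taken from any real tool.
LORA_TAG = re.compile(r"<lora:([^:>]+):(\d+(?:\.\d+)?)>")
WEIGHTED = re.compile(r"\(([^():]+):(\d+(?:\.\d+)?)\)")


def dissect_prompt(prompt):
    """Sort the comma-separated chunks of a prompt into three buckets."""
    parts = {"keywords": [], "weighted": [], "loras": []}
    for chunk in prompt.split(","):
        chunk = chunk.strip()
        if not chunk:
            continue  # skip the empty chunk left by a trailing comma
        lora = LORA_TAG.fullmatch(chunk)
        weighted = WEIGHTED.fullmatch(chunk)
        if lora:
            parts["loras"].append((lora.group(1), float(lora.group(2))))
        elif weighted:
            parts["weighted"].append((weighted.group(1), float(weighted.group(2))))
        else:
            parts["keywords"].append(chunk)
    return parts


# A shortened excerpt of the prompt quoted above.
sample = "masterpiece, pink hair, (half-length portrait:1.5), <lora:fashionGirl_v51:0.6>,"
result = dissect_prompt(sample)
print(result)
```

Even in this toy form, only the first bucket reads like language. The other two are settings, which is the point: the “writing” here is closer to configuration than description.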

Of course, nonverbal visual arts aren’t going to die out because text-to-video exists, but I think these points are worth talking about since our world is about to be flooded with AI-generated content. Our priorities should be kept in check when these new types of technologies emerge, especially when we’re dealing with creativity, since it’s really all there is to separate mankind from the automaton. Efficiency, optimization, automation - they all serve to increase productivity; but to what end?

The Spread

There’s a tension between our appetite for lo-fi, hyper-authentic, unpolished realism in our content (see: content creators, docs, podcasts) and the quest for automation, which in the case of current text-to-X tools produces content so highly polished that the polish itself is one of the biggest giveaways of its artificial origin.

Ultimately I feel that there need to be human stakes at play for people to be truly invested in anything, so for as long as Gen AI excludes emotional implications it’ll remain an impressive gadget and not much more: useful, and a change agent in certain aspects of daily life, but no more than the automated answering machine.

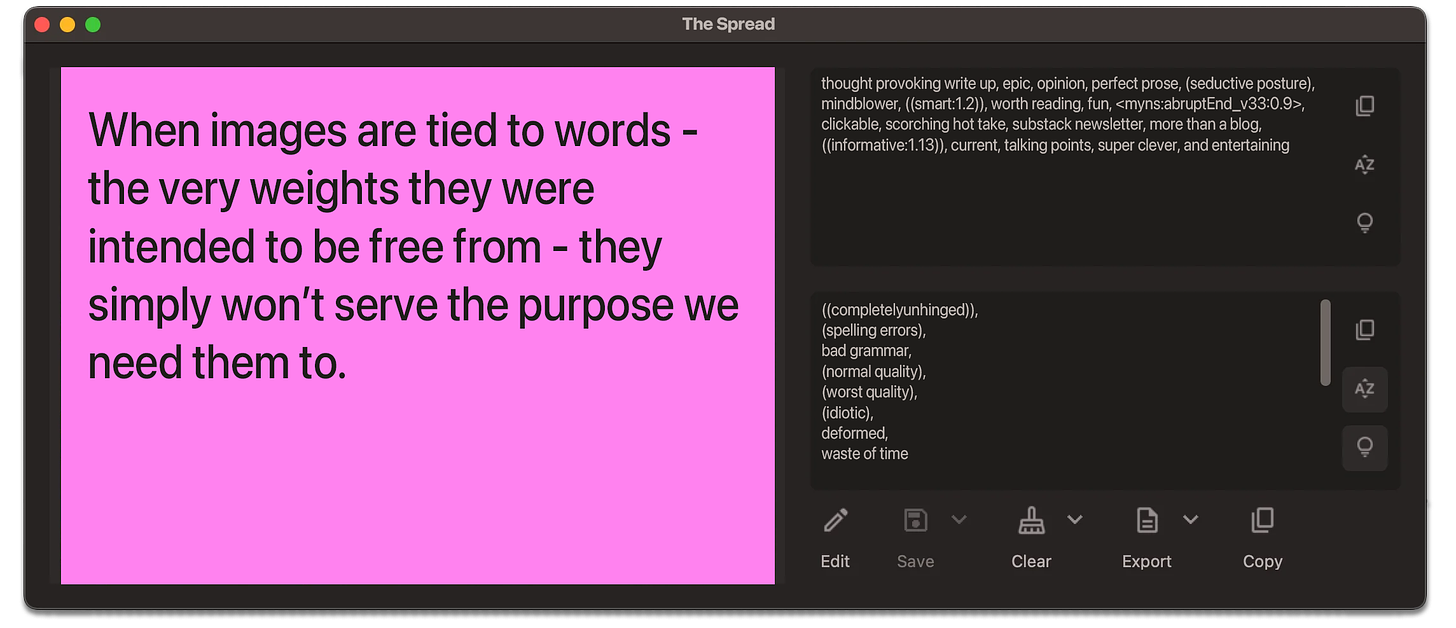

For all our rationality, we are predominantly emotional beings who created image-making to meet a need to express and communicate these emotions directly, without processing through language centers. When images are tied to words - the very weights they were intended to be free from - they simply won’t serve the purpose we need them to.

More Emulsion

Who would imagine: prompt engineers training AI to take their jobs.

Maybe there’s nothing to worry about. I mean, we’re not even that far into this chapter of The Future Is Now and we already have AI hiring people to do the jobs it was created to take from them.

Handfart covers: nonverbal communication at its finest.

And this widely seen and perfect statement:

Join the conversation on Discord.

Discuss without words, only pictures and vibes in the Mayonnaise Discord server.

This has been the 4th Mayonnaise dispatch, thanks for reading. Let me know what you think of it in the comments or tell me on Discord, because it’s your feedback that helps improve this project. If you liked what you read, consider sharing the Substack with people you think might like it too.